AI in Pharma: Faster Molecule-to-Market With Fewer Trial Delays

AI in pharma is pursued to cut cycle time, reduce cost, and raise decision quality without weakening compliance. The strongest gains come when AI is embedded in the value chain and translates data into actions across R&D, trials, operations, safety, and security.

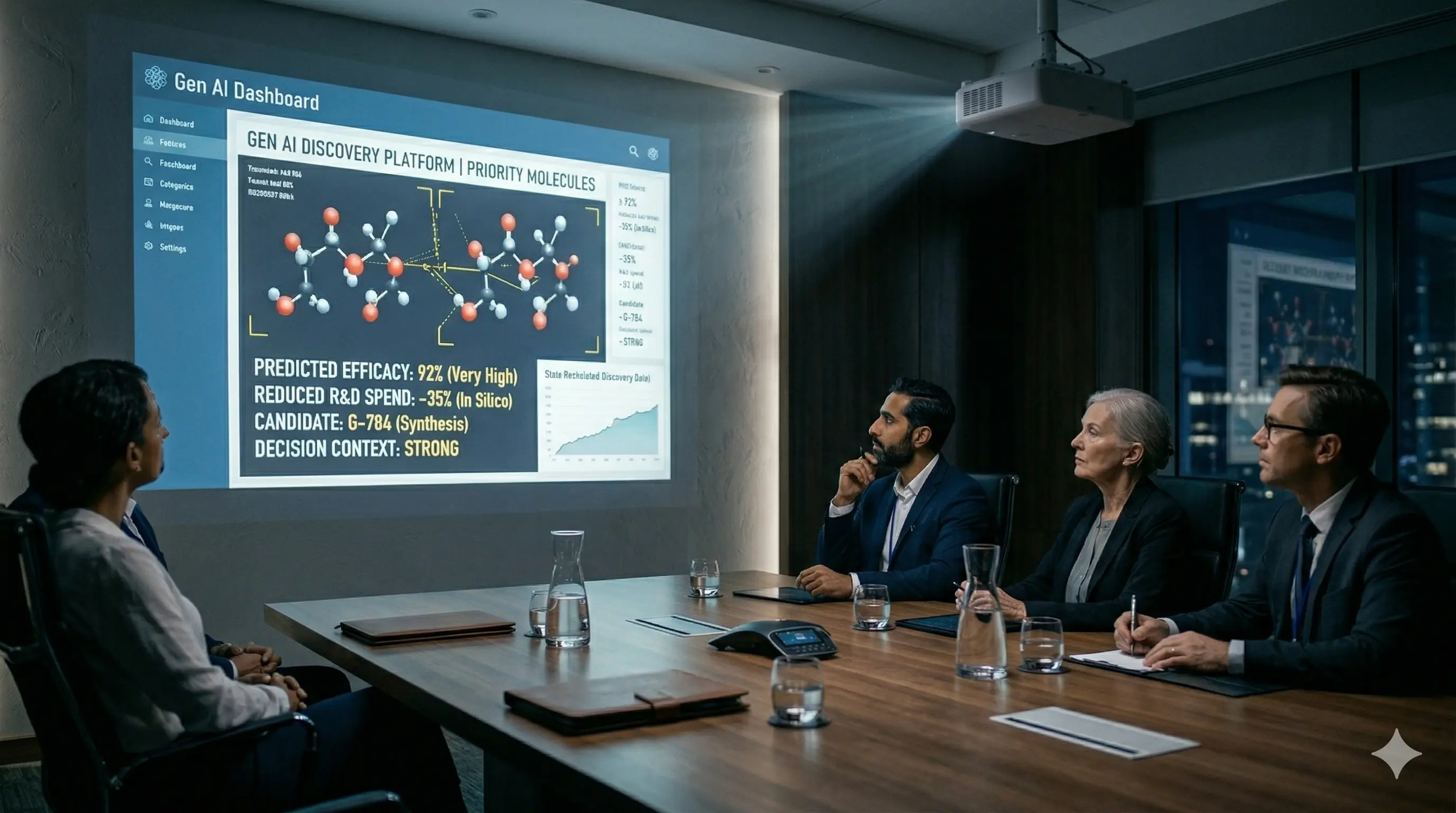

- Target and compound prioritization speed up early research decisions by ranking pathways, targets, and lead candidates before wet-lab spend scales

- Eligibility identification and risk monitoring reduce clinical development friction by matching participants faster and surfacing site and data risks early

- Predictive quality signals and maintenance analytics improve manufacturing stability by flagging drift, deviations, and equipment failure patterns before downtime hits

- Safety intake and case prioritization move faster when incoming signals are triaged and reviews are ranked under documented, traceable oversight

- Threat detection and response automation strengthens cyber resilience by spotting anomalous behavior across partners, platforms, and data pipelines sooner

Assign owners, set baselines, and make adoption non-optional. Measure progress through:

- Recruitment speed — time from screening to randomization

- Query aging — days to close critical data queries

- Manufacturing downtime — hours lost to unplanned stops

- Pharmacovigilance triage time — time to prioritize new safety cases
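As a minimal sketch of how two of these baselines could be computed, the Python below derives recruitment speed and query aging from dated records. The field names (`screened`, `randomized`, `opened`, `closed`, `critical`) and the sample records are hypothetical, and the choice to age still-open queries up to a reporting date is an assumption rather than a definition taken from any particular EDC system.

```python
from datetime import date
from statistics import median


def recruitment_speed(participants):
    """Median days from screening to randomization (randomized participants only)."""
    durations = [
        (p["randomized"] - p["screened"]).days
        for p in participants
        if p.get("randomized") is not None
    ]
    return median(durations) if durations else None


def query_aging(queries, as_of):
    """Median age in days of critical queries.

    Assumption: queries that are still open are aged up to `as_of`,
    so unresolved items are not hidden from the baseline.
    """
    ages = [
        ((q["closed"] or as_of) - q["opened"]).days
        for q in queries
        if q["critical"]
    ]
    return median(ages) if ages else None


# Illustrative records only; not real study data.
participants = [
    {"id": "P-001", "screened": date(2024, 1, 2), "randomized": date(2024, 1, 16)},
    {"id": "P-002", "screened": date(2024, 1, 5), "randomized": date(2024, 1, 26)},
    {"id": "P-003", "screened": date(2024, 1, 9), "randomized": None},  # screen failure
]
queries = [
    {"opened": date(2024, 2, 1), "closed": date(2024, 2, 6), "critical": True},
    {"opened": date(2024, 2, 3), "closed": None, "critical": True},  # still open
    {"opened": date(2024, 2, 4), "closed": date(2024, 2, 5), "critical": False},
]

baseline = {
    "recruitment_days": recruitment_speed(participants),
    "query_aging_days": query_aging(queries, as_of=date(2024, 2, 15)),
}
print(baseline)
```

Using the median rather than the mean keeps one slow site or one stale query from dominating the baseline; a team that prefers the mean or a percentile can swap the aggregation without changing the rest.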

AI Use Cases in Pharma — Where AI Creates the Most Value (R&D, Trials, Operations)

- Operations delivers the biggest lift because production, materials, and logistics sit on the widest cost base, and early drift signals prevent deviations, scrap, and rework. When evaluating AI solutions for pharma, map each use case to a specific workflow owner and KPI baseline before scaling.

- Planning improves when demand, inventory, and shipment telemetry updates forecasts continuously, reducing stockouts and expiry waste

- Clinical development gains come from removing enrollment friction, where eligibility matching speeds site activation and keeps screening momentum

- Data operations tighten as outliers and missing artifacts are spotted mid-study, shrinking query aging and lowering avoidable protocol churn

- R&D benefits upstream as multi-omic and literature signals rank targets and compounds, concentrating wet-lab effort on higher probability paths

- Commercial performance improves as segmentation and next-best-action models steer outreach and content toward responders, limiting low-yield touches

- Enabling teams set the pace by standardizing deployment, monitoring, and review steps, so models stay usable under compliance expectations across functions without rebuild cycles

Clinical Trials: Cut Delays With AI Across Recruitment, Design, and Data Review

- Recruitment improves when structured criteria and EHR signals identify eligible participants earlier, reduce manual chart review, and support diversity targets without slowing sites

- Feasibility and design tighten as real-world data highlights responder subgroups, refines inclusion rules, and reduces avoidable amendments that stall execution

- Data review accelerates when models flag anomalies, missing forms, and outlier labs during the study, prioritizing queries and preventing rework at closeout

By compressing these handoffs, teams cut the idle time between steps that drives cycle time, stabilize study quality, and keep decisions traceable through defined human review for high-impact changes.

The Hidden Blocker: Data Readiness and Interoperability (EDC/CTMS/eTMF + RWD)

AI stalls when trial and real-world data cannot move cleanly across systems. Interoperability comes first.

- Align identifiers, vocabularies, and metadata across EDC, CTMS, and eTMF to remove manual reconciliation and duplicate records.

- Connect RWD and EHR inputs through governed access, consent, and de-identification, with consistent definitions mapped to study criteria.

- Maintain end-to-end lineage and attach source citations to every generated output, enabling traceability for QA review and inspection response.

A stable data layer keeps automation reliable across analytics, safety, and reporting at scale.
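The identifier alignment and source-citation points above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a reference integration: the `CROSSWALK` table, the site ID formats, and the `Output` record are all hypothetical, and a real deployment would read them from governed master data rather than a literal dictionary.

```python
from dataclasses import dataclass, field

# Hypothetical crosswalk: (system, local ID) -> canonical site ID.
CROSSWALK = {
    ("EDC", "S-101"): "SITE-0001",
    ("CTMS", "101"): "SITE-0001",
    ("eTMF", "site_101"): "SITE-0001",
    ("EDC", "S-102"): "SITE-0002",
    ("CTMS", "102"): "SITE-0002",
}


@dataclass
class Output:
    """A generated statement plus the source records it was built from."""
    text: str
    sources: list = field(default_factory=list)


def canonicalize(records):
    """Group records by canonical ID and collect any with no mapping."""
    grouped, unmatched = {}, []
    for rec in records:
        key = (rec["system"], rec["local_id"])
        canonical = CROSSWALK.get(key)
        if canonical is None:
            unmatched.append(key)  # needs manual reconciliation
        else:
            grouped.setdefault(canonical, []).append(key)
    return grouped, unmatched


def summarize(canonical_id, grouped):
    """Build an output that carries its own source citations."""
    keys = grouped[canonical_id]
    return Output(
        text=f"{canonical_id}: {len(keys)} linked records",
        sources=list(keys),
    )


records = [
    {"system": "EDC", "local_id": "S-101"},
    {"system": "CTMS", "local_id": "101"},
    {"system": "eTMF", "local_id": "site_101"},
    {"system": "eTMF", "local_id": "site_999"},  # not in the crosswalk
]
grouped, unmatched = canonicalize(records)
report = summarize("SITE-0001", grouped)
print(report.text, report.sources, unmatched)
```

Surfacing unmatched identifiers explicitly, instead of silently dropping them, is the design choice that matters here: the unmatched list is the reconciliation backlog.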

Reg-Ready AI: Audit Trails, Human-in-the-Loop, and Model Monitoring

Controls built upfront keep AI usable in GxP and patient-safety workflows and prevent late QA blocks.

- Human-in-the-loop gates high-impact actions such as eligibility flags, protocol changes, batch disposition support, and safety triage, with role-based approvals.

- Explainability and traceability tie each output to source records, model versions, prompts, and decision rules through audit trails and retention.

- Drift and bias monitoring runs continuously across sites and populations, with thresholds that pause automation, trigger review, and log corrective actions.

This keeps decisions defensible across inspections and internal quality reviews.
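One way to picture these controls working together is the sketch below, which combines a drift check, a pause switch, a human approval gate, and an append-only audit log. It uses the Population Stability Index (PSI) as the drift metric, which is a swapped-in example rather than a method named in this article. The 0.25 threshold is a common rule of thumb treated here as an assumption, and the `AutomationGate` class and its method names are hypothetical.

```python
import math
from datetime import datetime, timezone

PAUSE_THRESHOLD = 0.25  # assumed value; set per validated workflow


def psi(expected, actual, eps=1e-6):
    """Population Stability Index between two lists of bin proportions."""
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0)
        total += (a - e) * math.log(a / e)
    return total


class AutomationGate:
    def __init__(self):
        self.paused = False
        self.audit_log = []  # append-only in this sketch

    def _log(self, event, **details):
        entry = {"ts": datetime.now(timezone.utc).isoformat(), "event": event}
        entry.update(details)
        self.audit_log.append(entry)

    def check_drift(self, site, expected, actual, model_version):
        """Pause automation when drift at a site crosses the threshold."""
        score = psi(expected, actual)
        event = "drift_ok"
        if score >= PAUSE_THRESHOLD:
            self.paused = True
            event = "paused_for_review"
        self._log(event, site=site, psi=round(score, 4), model_version=model_version)
        return score

    def resume(self, reviewer, corrective_action):
        """Resume only after a named reviewer records the corrective action."""
        self.paused = False
        self._log("resumed", reviewer=reviewer, corrective_action=corrective_action)

    def decide(self, action, high_impact, approver=None):
        """Block while paused; require an approver for high-impact actions."""
        if self.paused:
            self._log("blocked", action=action, reason="automation paused")
            return "blocked"
        if high_impact and approver is None:
            self._log("pending_approval", action=action)
            return "pending_approval"
        self._log("executed", action=action, approver=approver)
        return "executed"


gate = AutomationGate()
reference = [0.25, 0.25, 0.25, 0.25]  # illustrative score distribution at validation
print(gate.decide("eligibility_flag", high_impact=True))                  # no approver yet
print(gate.decide("eligibility_flag", high_impact=True, approver="PI-7"))  # approved
gate.check_drift("SITE-0001", reference, [0.05, 0.15, 0.30, 0.50], "v1.2")
print(gate.decide("eligibility_flag", high_impact=True, approver="PI-7"))  # paused
```

Every branch writes to the log, including the refusals, so the record shows what the system declined to do as well as what it did.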

From PoC to Scale: A 3-Step Industrialization Playbook

Scaling AI requires an operating model built for delivery, not isolated prototypes.

- Build a hybrid delivery team that pairs product ownership with engineering, data, quality, and the right partners, using shared standards for security and validation.

- Create an incubation path that turns ideas into reusable assets, with common data products, testing harnesses, release checklists, and approved patterns for regulated workflows.

- Drive adoption through role-based rollout, training tied to workflow changes, and KPI ownership at function level, with feedback loops that retire low-value use cases.

This approach converts pilots into repeatable execution across the value chain.

Cost-to-Value Guardrails: Where AI Pays Back (and Where It Burns Budget)

Cost overruns come from automating low-signal work and underestimating integration and inference. Protect ROI with two guardrails:

- Start with high-volume decisions where small accuracy gains compound, such as eligibility screening, query prioritization, deviation detection, and safety intake triage.

- Set unit-cost thresholds per workflow, tracking cost per case, cost per query resolved, or cost per batch supported, and stop automation when thresholds are exceeded.
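The second guardrail reduces to simple arithmetic: total spend divided by units of work, compared against a ceiling. The sketch below is illustrative only. The `WorkflowCost` class, the threshold of 2.00 per case, and the spend figures are hypothetical placeholders, and what counts as spend (integration, inference, reviewer time) is an assumption each team would need to fix with Finance.

```python
from dataclasses import dataclass


@dataclass
class WorkflowCost:
    name: str
    unit: str           # e.g. "case", "query", "batch"
    threshold: float    # maximum acceptable cost per unit (assumed)
    spend: float = 0.0  # cumulative cost attributed to the workflow
    units: int = 0      # cumulative units of work completed

    def record(self, cost, units_done):
        """Add one reporting period's cost and completed units."""
        self.spend += cost
        self.units += units_done

    @property
    def unit_cost(self):
        return self.spend / self.units if self.units else None

    def should_stop(self):
        """True once cumulative cost per unit exceeds the threshold."""
        cost = self.unit_cost
        return cost is not None and cost > self.threshold


triage = WorkflowCost(name="safety intake triage", unit="case", threshold=2.00)

triage.record(cost=150.0, units_done=100)  # 1.50 per case so far
print(triage.unit_cost, triage.should_stop())

triage.record(cost=250.0, units_done=50)   # 400 / 150 cases, above 2.00
print(round(triage.unit_cost, 2), triage.should_stop())
```

This version tracks cumulative unit cost; a rolling window would react faster to a recent cost spike, at the price of more noise.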

FAQs

How do we avoid AI getting stuck at a pilot?

Treat it as a regulated workflow from the start. Choose one use case, lock the EDC/CTMS/eTMF/RWD data flow, name the approvers, and ship audit logs and change control with the first release.

How do we prevent agentic AI compliance risk or runaway cost?

Limit agents to bounded tasks. Require human approval for high-impact actions. Track cost per case, query, or batch, and pause expansion when unit costs rise.

What outcomes should leadership expect that QA and Finance accept?

Expect KPI movement across the chain: faster enrollment, shorter query aging, fewer deviations, less downtime, and quicker PV triage. Report each result with its baseline, owner, and supporting evidence.

How do we keep AI inspection-ready in GxP and safety workflows?

Build evidence by design: audit trails, source traceability, versioning, retention. Gate critical decisions with HITL. Monitor drift and bias with stop-and-review triggers.

What data foundation is required to scale AI across pharma systems?

Standardize identifiers and metadata across EDC/CTMS/eTMF. Govern RWD/EHR linkage and definitions. Maintain lineage and source basis for every output.
